When to Stop Believing

Reflections on Belief-Poisoning in Food and Farming

I’ve heard that when you’re asleep, your brain literally can’t tell the difference between a dream and reality. That’s why it’s so critical for your body to release a paralytic hormone, to make sure you stay in your bed while you sleep, and not get up and act out your dreams. When this paralysis fails, it can cause huge problems (that can be both heartbreaking and hilarious). When the paralysis doesn’t lift quickly enough, many have the terrifying experience of a demon sitting on their chest.

I once watched a documentary about what we know about dreaming, and it was fascinating for the information it lacked. In fact, we don’t actually know that much about why we dream. Even sleeping itself is a bit nuts when you think about it.

“Ah well, the sun has set and predators are awakening, I’m off to voluntarily lose consciousness for six to eight hours, leaving my body incredibly vulnerable. Why, you ask? Because I’ll die if I don’t. Yes, even though usually losing consciousness is a very bad sign for my health. And yes, my brain will go on processing and functioning throughout the experience, I’ll just have no real control over it. And yes, sometimes it is terrible. Well, good night!”

It always struck me how many uncomfortable similarities there are between dreaming and believing. After all, to really believe in something is also a risky thing to do. It makes us vulnerable. Belief engages our brains and yet is difficult to control rationally. It can be paralyzing, and sometimes, it goes terribly wrong. This is true because to really believe in something is to be obligated to act, all the time, as if it were true. In that way, to our brains, a true belief, like a dream, is indistinguishable from reality. From the truth.

I’ve never been a reliable listener to This American Life (more of a RadioLab girl, myself), but there is one episode I think about a lot. It starts with a woman in her 30s at a party, and ends with her finding out that unicorns are not real.

This isn’t some crank belief. By odd happenstance, this woman had just, at some point in her early life, internalized the idea that unicorns are real. She thought they were rare, surely, she’d never seen one, but she’d never seen a white rhino or a polar bear either. She thought unicorns lived on the savannas of Africa, and she’d never been directly disabused of the notion. So when she ended up at a fancy party objecting to the idea that unicorns were mythological, a friend had to pull her aside and ask if she was making a really odd and earnest joke. But she wasn’t, she was learning that she had simply been wrong about this silly, unimportant piece of information for her whole life.

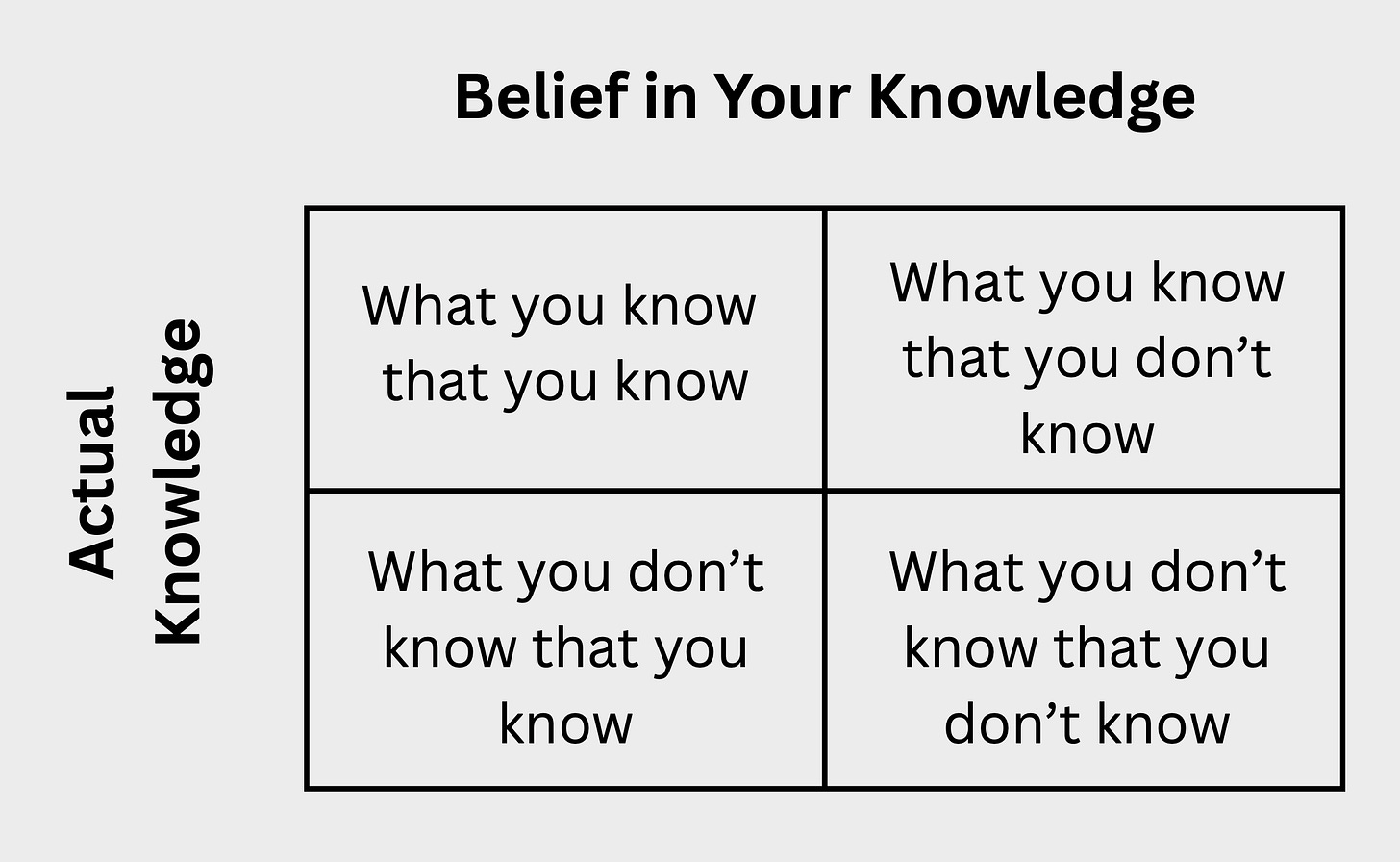

This introduces an important question about the things that we do and don’t know. One helpful way to understand and categorize these two different things is through a “knowledge matrix.”

Think of a simple two-by-two matrix. The top left box is “Things you know that you know.” In other words, information you know and that is accurate. I know my own name, for example, and I know with an extraordinary degree of certainty that that knowledge is accurate.

Next to that box is “Things you know that you don’t know”: facts you know are out there, but you don’t know them. Think, the square root of 3,458. This number does not live in my brain, but I know that it is knowable.

Below the first box is, “Things you don’t know that you know.” This is the intuitive box, knowledge that you might not consciously believe you possess, but given the right prompting, you can produce it. The lyrics to a song you haven’t heard in decades are one example; another is “How to make chocolate chip cookies.” It turns out most people who have ever made chocolate chip cookies before can, off the top of their heads, bring to mind a workable recipe.

The final box is the most interesting. “Things you don’t know you don’t know.” This is the trickiest kind of knowledge. It is the 30-year-old who thinks the African savannas are rife with unicorns. It’s those tidbits of information that we have in our big, dense, complex brains that have slipped past our internal and external falsehood detectors, and have become indistinguishable from knowledge. There are actually tons of common examples of things we “don’t know that we don’t know.”

For example, though most people in a survey group report, “knowing how a bike works,” most people could not draw a functional bicycle. Most people in the group reported knowing how a toaster works, how a microwave works, how a blow dryer works, but then when prompted to actually explain the workings of these simple machines, could not accurately explain any of it.

I heard an interview once, with a scientist who’s done a ton of work around how we understand what we do and don’t understand. At the end of the interview, he was asked, “How much of the average brain, in your estimation, contains incorrect information, or things ‘we don’t know we don’t know’?” His approximate guess: about 10%. That’s one in ten pieces of information in the average brain that, despite seeming accurate, might just… not be.

The interviewer also asked, “How can we tell the difference between information we know and information we don’t know that we don’t know?”

“Frankly,” the scientist replied, “beyond honest and rigorous interrogation, there’s no way to tell. Knowing feels like knowing, whether what you ‘know’ is true or not.”

So what?

Though this kind of “knowledge” is not quite the same as belief, they share that one important characteristic: they feel the same. But the differences are important too. Believing that unicorns are real is simply an uninterrogated idea. A quick Google search (or embarrassing talk with a friend at a party) will probably set you straight.

True belief is trickier than that. It’s more like dreaming. Belief usually involves some amount of knowing, and perhaps even some awareness that it might not be true. A belief is often formed when a person considers some quantity of evidence, makes a determination that the outcome is at least somewhat inconclusive, and then chooses an outcome based not on the objective strength of the evidence either way, but because of its subjective strength, to them personally. It’s the choosing, I think, that causes a kind of paralysis, that makes belief such a rich cocktail to imbibe. It’s a mixture of information and choice, and the combination raises the stakes of being wrong. After all, it’s one thing for the facts not to add up, it’s another thing to indict our decision-making, to suggest that we, ourselves, were the ones that came up short. That’s how belief becomes indistinguishable from the truth– because we want it to be true.

Don’t get me wrong, sometimes this ability of ours to manufacture belief is almost miraculous. To me, it’s why when we tell one another, “I believe in you,” it’s so wonderful. It indicates that, “You’re not a sure thing, but I care about you, and therefore I’m choosing to attach myself, my conviction, and my ability to make sense of the world to your [success, achievement, ability to overcome odds].”

Sometimes though, belief can be, generously, problematic. I come across this in agriculture a lot, something that might be called “beliefism” or “belief poisoning.”

Here’s how it sometimes looks:

“Hi Sarah. Thanks for your writing about the faulty economics of small scale farming in America. But can’t you acknowledge that if farmers would just plant organic, regenerative crops and focus on diversity and consumer health above all, that they can make hundreds of thousands of dollars an acre? There’s money to be made in farming if people would only put in a little effort and do it the right way. Why won’t you acknowledge that in your writing?”

Maybe this paragraph looks familiar, or sane, to you. Maybe it doesn’t. I’ve been getting a flavor of this paragraph about once a week for five years. I’ve done interviews and had private chats to this effect. I’ve read official dress-downs of my work that read just like this.

There was a time when I believed in responding to all these paragraphs as gently, thoughtfully, and open-mindedly as I could, and often invited the commenters to a call or meeting to discuss their perspective more. But what I learned from all the non-responses, the clapbacks, and the takedowns I would get in response is that folks who pen these paragraphs aren’t often writing out of genuine interest or curiosity. They are true believers, and they are writing only to put me on notice that they don’t appreciate my adding weight to the argument opposite their belief.

The email is not to announce that, “I’m beginning a process of introspection, and am genuinely looking for more information as I re-evaluate my belief.” The email is to say, “my belief will not be changed, I’m insulted you would dare to challenge it, and if you don’t get on board with my belief, I’ll be writing you off as a dumb bitch.”

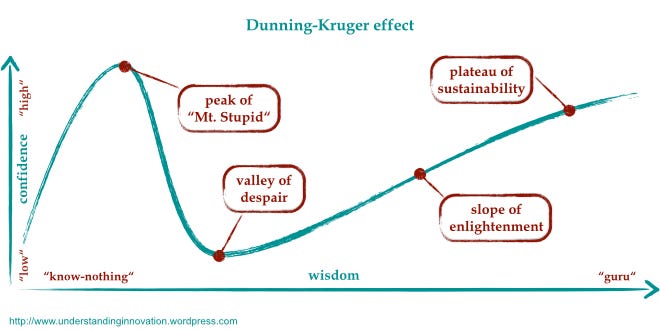

Agriculture is a really ripe area for beliefism. I think it’s because a lot of people have just enough experience with it to be overly-confident, and too little experience with it to actually know much. After all, most everyone has been to a grocery store. Everybody eats. A lot of people have been to the farmers market or have put a seed in a styrofoam cup or the ground. Many people have been to a farm (or a petting zoo). And what’s more, there’s something about agriculture that just seems intuitive, like all humans were just born able to do it, like having sex or giving birth. I think in a lot of people’s minds, farming is an innate ability, and therefore they have latent expertise they can call on whenever they like.

In that way, food and agriculture lend themselves very well to both beliefs and “things we don’t know we don’t know” (smarter people than me call this the Dunning-Kruger effect).

And I think this phenomenon becomes especially pronounced at the intersections where food and ag cross with climate change and environmentalism. Most of the very strongest and most insane beliefists I’ve encountered live there. In beliefs like, “we can use agriculture to make landscapes healthier than they were before agriculture” and “companies have a financial incentive to protect the environment.”

On some level, I totally understand both of these beliefs. They seem wonderful and incredibly powerful. I mean, if agriculture can make land even healthier than it was before, then we never have to think about what’s the right amount of agriculture! And if companies will do environmentalism, then we never have to deal with our fucked up farm policy!

But these beliefs have not been interrogated. To the holders of the former, I would challenge that agriculture is, by definition, extractive. I’m sorry that that is true, but it is, especially modern agriculture. Unless a group of people grows all their own food on a given piece of land with no outside inputs, lives their whole lives on it, and returns all their waste to the landscape, including their dead bodies, then matter has been removed from the system. Matter cannot be created or destroyed. It cannot be “regenerated” from nothing. Sure, missing inputs can be inserted from outside the system, but then you are “regenerating” one space while degenerating another. I’m not denying that “improving the health of land” is possible with good agricultural stewardship. Sustaining, too, is likely possible. But I think the idea that agricultural extraction can be a net benefit to a place is clearly a unicorn on the savanna, just one that people really, really want to believe is out there.

The idea that companies have a financial incentive to protect the environment is similar. It’s nice to believe that the biggest and most powerful organizations in our modern world have a fundamental rationale to pursue the common good. But that is not what businesses in a capitalist system do. Businesses maximize profit (though a few, I grant, also pursue public interest as a secondary goal). But let’s be honest, the vast majority of businesses, and all the very biggest, don’t give a flying fuck about the environment. To be frank, they barely care about next year. They mainly care about how much money they can possibly extract in the next 90 days. The idea that they have any other priorities or incentives is pure beliefism.

To me, the problem with this kind of beliefism is that it stalls out our progress on learning and finding real solutions. After all, why learn how a bicycle works when we believe we already know? Why learn about the mythological origins of unicorns– and why we invented them– if we maintain the conviction that they’re real?

I can acknowledge too that to part with a belief can be a painful thing. To acknowledge that we’ve attached ourselves to an idea that turned out to be wrong can be embarrassing, shame-inducing, and can even lead to existential crisis. If I was wrong about this, the realization threatens, what else could I be wrong about?

I feel these feelings all the time, and they suck. I often feel them when I get the paragraphs. I feel them when I read comments on my work. Sometimes I read people’s feedback, and I feel that creeping sense of dread. “What if I’m wrong and they’re right? What if I’ve been researching this stuff for more than a decade, and I still don’t have it figured out? What if I missed something? What if I am just some dumb bitch on the internet?”

And honestly, sometimes these feelings send me back to the drawing board, looking for more evidence, more data, more stories that might add more complexity, more layers, more paradoxes to this agricultural rat’s nest that I’ve been thrashing in all this time. And sometimes they turn to anger, and I end up writing a long essay about my experiences with this feedback, in which I try very hard not to strawman my critics, even though I believe that these people are mostly self-important cranks who know so little about food and ag that they can’t even comprehend how deeply they don’t know what they’re talking about. And sometimes they prompt me to log off, to touch grass, and to think about the real people in my life who think my fun facts are interesting and otherwise could not give two shits about what I do or don’t know about agriculture or anything else.

Despite all of this, I still believe in belief. I believe in a lot of other things too. That’s how I know that beliefs feel like truths, because my beliefs feel like truths to me. But I do try my best to at least label them as “beliefs.” It helps me remember that I need to keep checking on them, keep learning and wondering about them. Keep seeing if more information is available, or if my mind changed while I was learning, thinking, or dreaming about something else. I’m sure sometimes though, beliefs slip through, shake off their labels, and stand up among my knowledge. And that’s why I try to remember, when I get harsh feedback or mean emails, that yeah, I might be wrong. 10% of everything I think is probably wrong, and I have no way to tell which 10%, except by checking it. So I do that as well, and I try to be as proud as I can be that I have, despite the pain, figured out how to change my mind and stop believing.

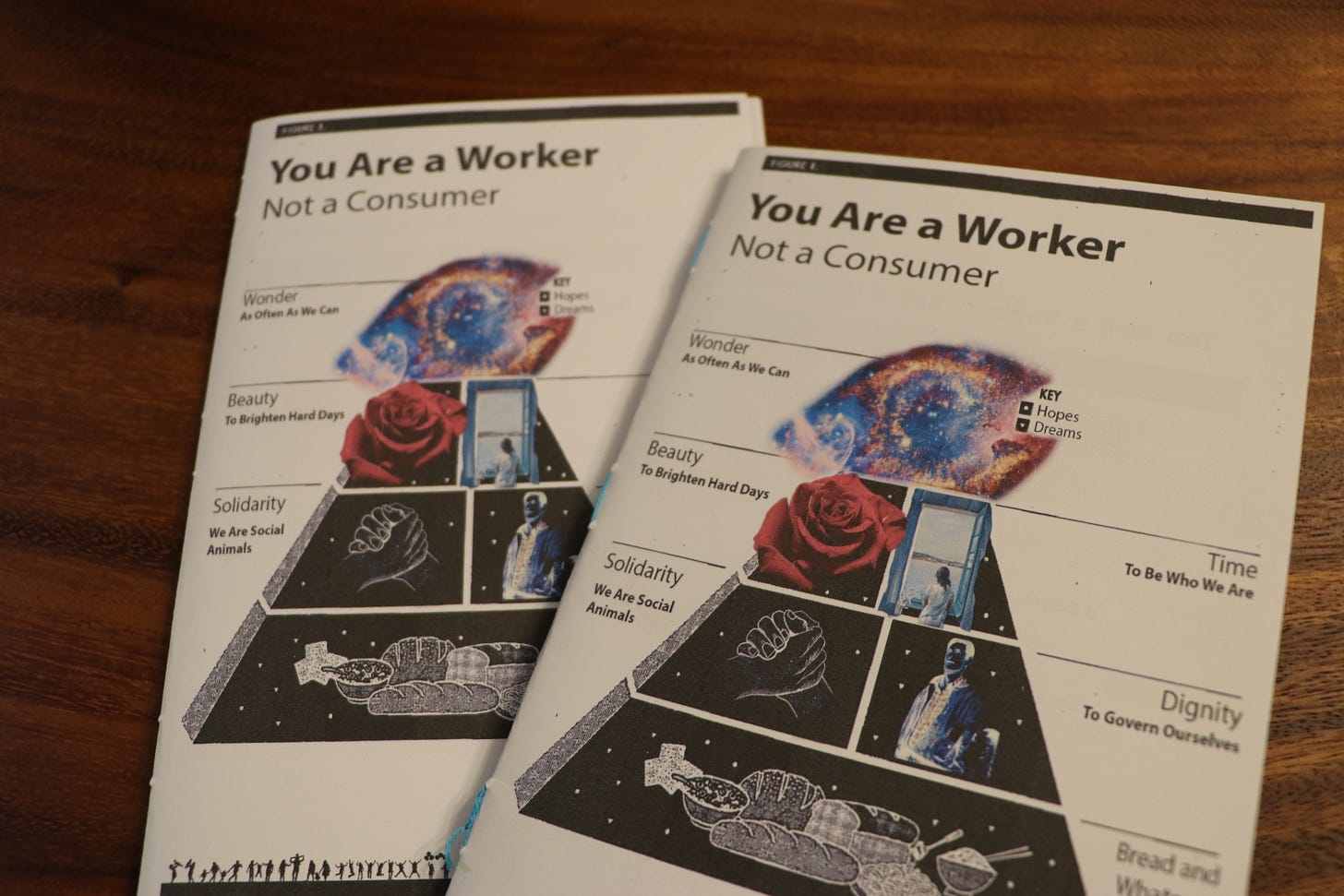

Thanks for reading! Retroactive programming note, I took the last two weeks off (haha). Hope you enjoyed an unannounced period of lightened inbox load. I’m back in my intense writing season, so things might become slightly more hit or miss for me here in the newsletter in the next few months. In the meantime, thanks so much for all of your support as always. And hey, new drops in the world of pamphs! Visit the website to check out the latest.

Nice post. I especially related to this: "I feel these feelings all the time, and they suck. I often feel them when I get the paragraphs. I feel them when I read comments on my work. Sometimes I read people’s feedback, and I feel that creeping sense of dread. “What if I’m wrong and they’re right?" Oh does that lack of certitude suck, but such is the call.

When you get to know a subject you realise how little you actually know. Even the simplest of activities require mind blowing amounts of knowledge to really understand. I always talk about humans learning to be less extractive, we can never become regenerative, we just have to do less harm.